Dheeraj Jha

Mar 4, 2020 · 3 min read

Updated: May 1, 2020

Hey folks,

Thanks for coming back again.

In my last couple of blogs, I discussed thread creation, a synchronization technique (mutex), a performance issue with mutexes, and how to solve it using a condition variable. (Check out my previous blogs here.) But a mutex still has a couple of performance issues.

We will discuss one of them in this article.

Let's assume we have a system with one producer and multiple consumers. The producer acquires a mutex lock, produces data, and unlocks the mutex. Let's also assume the producer waits for some event to happen, after which it updates existing data or produces new data.

We have multiple consumers in our system, each acquiring the mutex lock on the data, reading the data, and releasing the lock. For simplicity, let's assume we have 2 consumer threads.

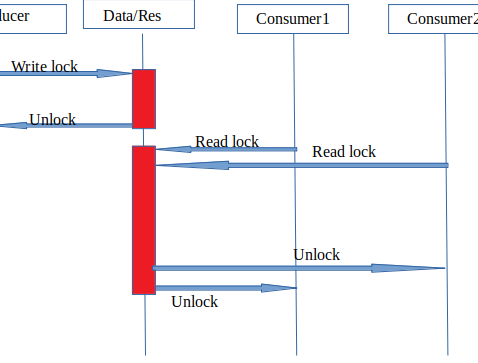

The diagram shows how the lock works between multiple consumer threads.

In this diagram, you can see that the producer takes the lock to produce data, which is fine. The problem is on the consumer side.

No consumer is going to update any data. Each consumer takes a lock on the resource, reads the data, and releases the lock. Each consumer has to wait for the mutex to be unlocked before it can acquire the lock itself.

Sounds good. No problem here.

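Now assume each thread holds the lock for 1 second while doing some processing and then releases it, and we have thousands of such threads. Only one thread can hold a mutex at a time, so the reads run one after another: a thousand readers need about a thousand seconds in total, even though none of them modifies the data. Now it is a problem, a performance issue. This is a read contention problem with an exclusive lock.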
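To make the cost concrete, here is a minimal sketch of readers serialized by an exclusive lock. It is not the code from this article; `shared_value`, `value_mutex`, and `time_exclusive_readers` are hypothetical names chosen for illustration, and the sleep only stands in for real processing.

```cpp
#include <cassert>
#include <chrono>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared data and its exclusive lock (names are assumptions).
int shared_value = 42;
std::mutex value_mutex;

// Starts reader_count threads that each hold the mutex for hold_time while
// reading, and returns the total wall-clock time in milliseconds.
long long time_exclusive_readers(int reader_count,
                                 std::chrono::milliseconds hold_time) {
    assert(reader_count > 0);
    std::vector<int> seen(reader_count, 0);
    std::vector<std::thread> readers;
    const auto start = std::chrono::steady_clock::now();
    for (int i = 0; i < reader_count; ++i) {
        readers.emplace_back([&seen, i, hold_time] {
            // Exclusive lock: only one reader can be in here at a time.
            std::lock_guard<std::mutex> lock(value_mutex);
            seen[i] = shared_value;                  // read-only access
            std::this_thread::sleep_for(hold_time);  // simulated processing
        });
    }
    for (auto& t : readers) t.join();
    const auto elapsed = std::chrono::steady_clock::now() - start;
    return std::chrono::duration_cast<std::chrono::milliseconds>(elapsed).count();
}
```

Calling `time_exclusive_readers(4, std::chrono::milliseconds(50))` should take roughly 200 ms or more, because the four 50 ms reads cannot overlap. The total grows linearly with the number of readers.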

To solve this kind of issue, C++14 provides shared_timed_mutex and C++17 provides shared_mutex.

shared_mutex: provides a shared mutual exclusion facility.

shared_timed_mutex: provides a shared mutual exclusion facility and implements locking with a timeout.
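The timeout part can be sketched as follows. This is an illustrative example, not code from this article: `rw_mutex`, `config_value`, `read_with_timeout`, and `slow_write` are hypothetical names. It relies on the `std::shared_lock` constructor that takes a duration, which calls `try_lock_shared_for` on the mutex.

```cpp
#include <cassert>
#include <chrono>
#include <mutex>
#include <shared_mutex>
#include <thread>

// Hypothetical shared state for this sketch (names are assumptions).
std::shared_timed_mutex rw_mutex;
int config_value = 7;

// Tries to get a shared (read) lock within timeout.
// Returns true and copies the value into out on success, false on timeout.
bool read_with_timeout(std::chrono::milliseconds timeout, int& out) {
    assert(timeout.count() >= 0);
    std::shared_lock<std::shared_timed_mutex> lock(rw_mutex, timeout);
    if (!lock.owns_lock()) {
        return false;  // a writer held the lock for the whole timeout
    }
    out = config_value;
    return true;
}

// Holds the exclusive (write) lock for hold_time to simulate a slow writer.
void slow_write(int new_value, std::chrono::milliseconds hold_time) {
    std::unique_lock<std::shared_timed_mutex> lock(rw_mutex);
    config_value = new_value;
    std::this_thread::sleep_for(hold_time);
}
```

If one thread is inside `slow_write` and another calls `read_with_timeout` with a timeout shorter than the remaining hold time, the reader gives up and returns `false` instead of blocking indefinitely. With plain `shared_mutex`, the reader could only block or try once without waiting.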

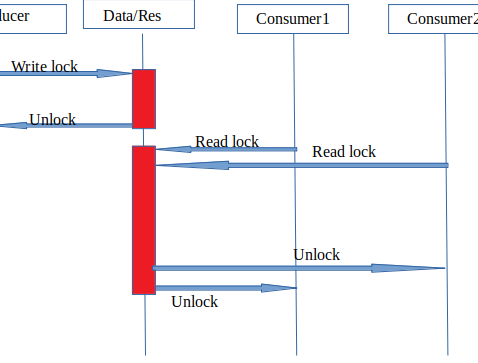

Let's see what the above situation looks like with shared_timed_mutex.

In this case too, the producer acquires a unique lock (unique_lock<shared_timed_mutex>) and updates the resource. Each consumer acquires a shared lock (shared_lock<shared_timed_mutex>). With a shared lock, any consumer can acquire the lock as long as no thread holds a unique lock on the resource.

The benefit is that consumer/reader threads need not wait for other readers to release the lock before reading, so all consumers can work in parallel.
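The locking scheme described above can be sketched like this. It is a minimal illustration under assumed names (`resource_mutex`, `produce`, `consume`, `run_consumers`), not the source code linked in this article. The atomic counters exist only to observe how many readers are inside the shared lock at the same moment.

```cpp
#include <algorithm>
#include <atomic>
#include <cassert>
#include <chrono>
#include <mutex>
#include <shared_mutex>
#include <thread>
#include <vector>

// Hypothetical shared state (names are assumptions, not from the article).
std::shared_timed_mutex resource_mutex;
int resource = 0;
std::atomic<int> active_readers{0};
std::atomic<int> peak_readers{0};

// Producer: takes the unique (exclusive) lock while updating.
void produce(int value) {
    std::unique_lock<std::shared_timed_mutex> lock(resource_mutex);
    resource = value;
}

// Consumer: takes a shared lock, so several consumers can read together.
int consume(std::chrono::milliseconds hold_time) {
    std::shared_lock<std::shared_timed_mutex> lock(resource_mutex);
    const int now_active = ++active_readers;
    // Record the highest number of readers seen inside the lock at once.
    int prev = peak_readers.load();
    while (prev < now_active &&
           !peak_readers.compare_exchange_weak(prev, now_active)) {
    }
    const int value = resource;
    std::this_thread::sleep_for(hold_time);  // simulated processing
    --active_readers;
    return value;
}

// Publishes one value, runs reader_count consumers in parallel, and
// returns the peak number of consumers that held the lock simultaneously.
int run_consumers(int reader_count, std::chrono::milliseconds hold_time) {
    assert(reader_count > 0);
    produce(42);
    std::vector<int> seen(reader_count, 0);
    std::vector<std::thread> consumers;
    for (int i = 0; i < reader_count; ++i) {
        consumers.emplace_back(
            [&seen, i, hold_time] { seen[i] = consume(hold_time); });
    }
    for (auto& t : consumers) t.join();
    // Every consumer should have read the value the producer published.
    assert(std::all_of(seen.begin(), seen.end(),
                       [](int v) { return v == 42; }));
    return peak_readers.load();
}
```

With a plain `std::mutex` the peak could never exceed 1. Here, `run_consumers(4, std::chrono::milliseconds(100))` will typically report a peak above 1, since readers overlap whenever no producer holds the unique lock.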

Let's try to simulate this situation.

Source code is here.

When I ran this code as is, using a mutex, I got the output below.

Then I commented out lines 11, 29, 34, 41, and 46, and uncommented lines 10, 30, 35, 42, and 47.

After executing, the output was...

You can see the significant difference in total run time. Consider what the performance would be if you had thousands of reader threads.

There is one more issue in a multi-threaded environment. Let's say a thread acquires a lock on a resource and then crashes. C++11/14 introduced a beautiful feature to handle this kind of situation. I will discuss it in the next blog.

I hope I was able to clearly explain the need for shared_mutex (read-write mutex). Let me know if you have any suggestions or improvements. I will be happy to address them.
